This page may be low-quality. Links to it have been removed.

Some broad sources:

- Towards best practices in AGI safety and governance (Schuett et al. 2023)

- Three lines of defense against risks from AI (Schuett 2022)

- The Malicious Use of Artificial Intelligence: Forecasting, Prevention, and Mitigation (Brundage et al. 2018) (recommendations)

- Frontier AI Regulation: Managing Emerging Risks to Public Safety (Anderljung et al. 2023)

- Managing AI Risks in an Era of Rapid Progress (Bengio et al. 2023)

- Emerging processes for frontier AI safety (UK Department for Science, Innovation & Technology 2023)

- AI Risk-Management Standards Profile for General-Purpose AI Systems (GPAIS) and Foundation Models (Barrett et al. 2023)

- Toward Trustworthy AI Development: Mechanisms for Supporting Verifiable Claims (Brundage et al. 2020) (recommendations) and Filling gaps in trustworthy development of AI (Avin et al. 2021)

Some sources on specific topics:

- Responsible Scaling Policies (METR 2023)

- Model evaluation for extreme risks (Shevlane et al. 2023) (paper)

- Structured Access (Shevlane 2022) (paper)

- Open-Sourcing Highly Capable Foundation Models (Seger et al. 2023)

- Deployment corrections: An incident response framework for frontier AI models (O’Brien et al. 2023)

- Securing AI Model Weights (Nevo et al. 2023)

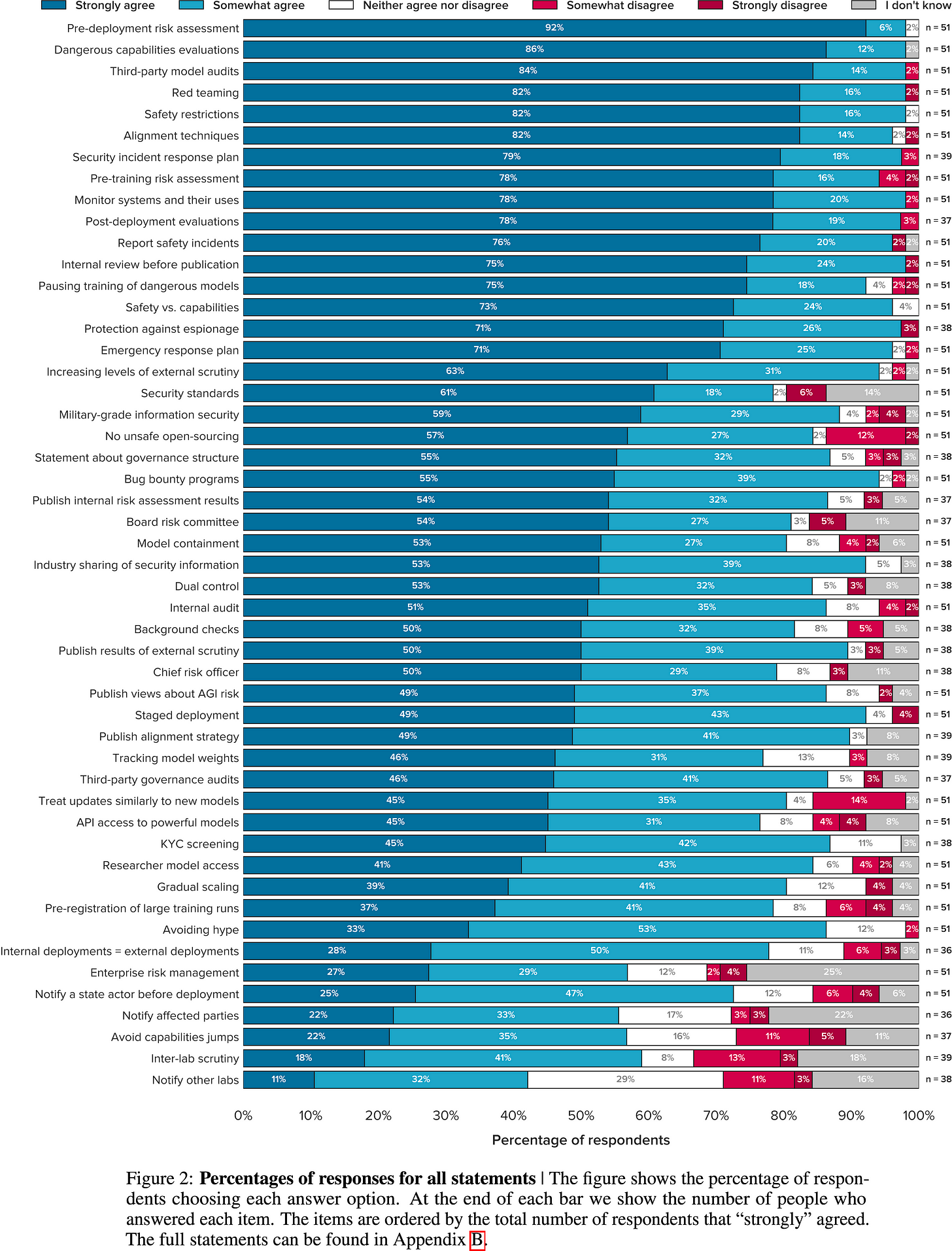

Figure from Towards best practices in AGI safety and governance (Schuett et al. 2023), an expert survey on safety practices for labs.